Deep Vision

Overview

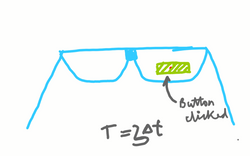

The motivation for this idea is to enable hands-free, always-available interaction with technology. It is realized as an eye-tracking-based interface for head-mounted displays (HMDs) such as Google Glass.

How it works

The user wears an HMD along with a wearable eye tracker such as Tobii Glasses. A specially designed UI is displayed in the user’s field of view. The user performs UI actions, such as clicking a button or scrolling, by staring at the actionable UI elements.

UI


A button is selected by holding the gaze (the direction in which the user is looking) on it for a short time.
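Gaze-based button selection of this kind is commonly called dwell-time selection. The following is a minimal sketch of the idea, not a definitive implementation. It assumes the eye tracker delivers gaze points in display coordinates together with a timestamp in seconds; the `Button` and `DwellSelector` names and the 0.8 second dwell threshold are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Button:
    """A rectangular UI element in display coordinates (hypothetical layout)."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, gx: float, gy: float) -> bool:
        return (self.x <= gx <= self.x + self.width
                and self.y <= gy <= self.y + self.height)


class DwellSelector:
    """Fires a selection once the gaze has rested on one button long enough."""

    def __init__(self, buttons: List[Button], dwell_seconds: float = 0.8):
        self.buttons = buttons
        self.dwell_seconds = dwell_seconds  # assumed threshold, tune per user
        self._current: Optional[Button] = None
        self._since = 0.0
        self._fired = False

    def update(self, gx: float, gy: float, t: float) -> Optional[str]:
        """Feed one gaze sample; return a button name when it gets selected."""
        target = next((b for b in self.buttons if b.contains(gx, gy)), None)
        if target is not self._current:
            # Gaze moved to a different element (or off all elements): restart.
            self._current = target
            self._since = t
            self._fired = False
            return None
        if (target is not None and not self._fired
                and t - self._since >= self.dwell_seconds):
            self._fired = True  # select once per continuous dwell
            return target.name
        return None
```

Feeding the selector one sample per tracker frame is enough: a selection is reported once per continuous dwell, and looking away resets the timer, which helps avoid accidental repeated clicks while the user simply reads the label.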

Scrolling

Menu

Power Button

Since the display projects into the user’s field of view, it is important that the device does not interfere with the user’s daily activities by obstructing their vision when they do not wish to use it. One way to solve this would be a power button that switches the display on and off at the user’s will, but this conflicts with the objective of keeping the device hands-free.

This can be solved by making the on/off mechanism respond to gaze. Whenever the user looks at and focuses on the display, it turns on; when the user looks away, it turns off automatically. This mechanism also removes a deliberate cognitive step from using the system, because the system reacts to the natural signal of the intention to use it, i.e. gaze.

I intend to implement this feature by tracking the gaze direction and detecting the accommodation reflex of the eye.
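A minimal sketch of such a gaze-driven power switch is shown below. It rests on assumptions that are not part of the proposal: that the tracker reports whether the gaze direction falls on the display region, and that some estimate of the eye's focus distance is available as a stand-in for the accommodation reflex. The class name, the 2.0 m virtual image distance, the tolerance, and the on/off delays are all hypothetical values for illustration.

```python
class GazePowerSwitch:
    """Turns the display on when the user looks at and focuses on it."""

    def __init__(self, display_distance_m: float = 2.0,
                 tolerance_m: float = 0.5,
                 on_delay: float = 0.3, off_delay: float = 1.0):
        self.display_distance_m = display_distance_m  # assumed virtual image distance
        self.tolerance_m = tolerance_m
        self.on_delay = on_delay      # seconds of intent needed to switch on
        self.off_delay = off_delay    # seconds of looking away before switching off
        self.is_on = False
        self._pending_since = None    # when the wanted state last started to differ

    def update(self, gaze_on_display: bool, focus_distance_m: float, t: float) -> bool:
        """Feed one sample (timestamp t in seconds); return the display state."""
        focused = abs(focus_distance_m - self.display_distance_m) <= self.tolerance_m
        wants_on = gaze_on_display and focused
        if wants_on == self.is_on:
            self._pending_since = None  # nothing to change, cancel any pending switch
            return self.is_on
        if self._pending_since is None:
            self._pending_since = t
        delay = self.on_delay if wants_on else self.off_delay
        if t - self._pending_since >= delay:
            self.is_on = wants_on
            self._pending_since = None
        return self.is_on
```

The two delays are a design choice to keep the display from flickering: a passing glance does not switch it on, and a blink or brief look away does not switch it off. Requiring both gaze direction and focus is meant to distinguish looking at the display from looking through it at the world behind.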

Example use cases

Use of peripheral vision - Notifications By displaying visual alerts in the peripheral vision region, the user can be unobstructedly notified of events |  Context aware actions - Set up meeting By always listening to the context, suitable actions/information can be made available to the user. |

|---|---|

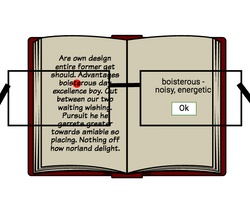

Intersection of context, human cognition and always listening - Dictionary By understanding the user’s reading pattern(pauses on words, etc) and previous knowledge(has the word been encountered before), the glass can know when the user is struggling to find the meaning of a certain word and can help the user with the meaning. |  Camera can be made handsfree |

Music can be controlled hands free while doing a task like driving |
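The dictionary use case can be illustrated with a small sketch. It assumes the eye tracker output has already been mapped to per-word fixations, given as `(word, duration_in_seconds)` pairs, and that a set of words the user has previously encountered is available. The function name and the 1.0 second pause threshold are hypothetical; a real system would likely need a per-user baseline rather than a fixed threshold.

```python
from typing import Iterable, List, Set, Tuple


def words_needing_help(fixations: Iterable[Tuple[str, float]],
                       known_words: Set[str],
                       pause_seconds: float = 1.0) -> List[str]:
    """Return unfamiliar words on which the reader paused unusually long.

    A word is flagged when a single fixation on it lasts at least
    `pause_seconds` and the word is not in `known_words` (lowercase).
    """
    flagged: List[str] = []
    for word, duration in fixations:
        key = word.lower()
        if (duration >= pause_seconds
                and key not in known_words
                and key not in flagged):
            flagged.append(key)
    return flagged


# Example: the reader lingers on one unfamiliar word and one familiar word.
fixations = [("The", 0.2), ("perspicacious", 1.4), ("reader", 0.3),
             ("Perspicacious", 1.2), ("paused", 1.1)]
known = {"the", "reader", "paused"}
print(words_needing_help(fixations, known))  # ['perspicacious']
```

Combining the pause with prior knowledge is what keeps the feature unobtrusive: a long pause on a familiar word, such as "paused" above, does not trigger a definition.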